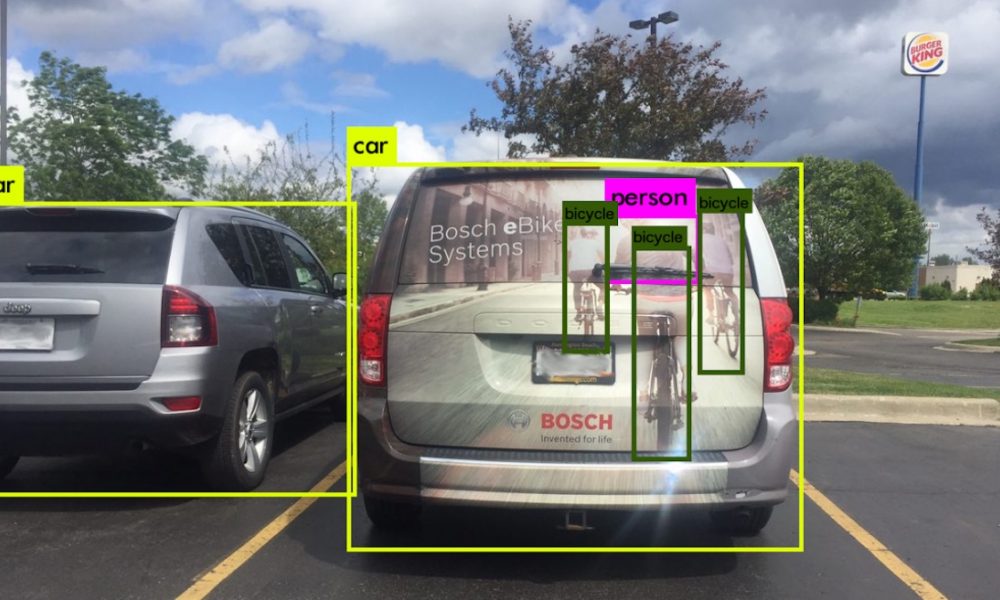

Autonomous vehicles are often proudly advertised as carrying an extensive list of sensors: cameras, ultrasound, radar, lidar, you name it. But if you have ever wondered why so many sensors are required, look no further than this picture. You’re looking at what’s known in the autonomous car industry as an edge case: a situation where a vehicle might behave unpredictably because its software processes an unusual scenario differently from the way a human would. In this case, image recognition software applied to data from a standard camera has been fooled into thinking that pictures of cyclists on the back of a van are genuine human cyclists.

This particular blind spot was identified by researchers at Cognata, a company that builds software simulators (in essence, highly detailed and programmable computer games) in which automakers can test autonomous driving algorithms. That allows them to throw these sorts of edge cases at vehicles until they can work out how to cope with them, without risking an accident. Most autonomous vehicles overcome issues like the baffling image by using several kinds of sensing. Lidar can’t sense glass, radar senses mainly metal, and the camera can be fooled by pictures, explains Danny Atsmon, the chief executive officer of Cognata. Each of the sensors used in autonomous driving is there to solve a different part of the sensing challenge.

By gradually figuring out which data can be used to deal correctly with particular edge cases, either in simulation or in real life, the vehicles can learn to cope with more complicated situations. Tesla was criticized for its decision to use only radar, camera, and ultrasound sensors to provide data for its Autopilot system after one of its vehicles failed to distinguish a truck trailer from a bright sky and ran into it, killing the Tesla’s driver. Critics argue that lidar is a vital component of the sensor mix: it works well in low light and in glare, unlike a camera, and provides more detailed data than radar or ultrasound. But as Atsmon points out, even lidar isn’t without its flaws: it cannot tell the difference between a red and a green traffic signal, for example. The safest bet, then, is for automakers to use a range of sensors in order to build redundancy into their systems. Cyclists, at least, will thank them for it.
